Let us continue our study of SRS. Recall that in part 3 we introduced the inclusion probability of a unit,

$$\pi_i = P(\text{unit } i \text{ is in the sample}).$$

Subsequently, we introduced $w_i$, the sampling weight:

$$w_i = \frac{1}{\pi_i}.$$

The sampling weight of unit $i$ can be interpreted as the number of population units represented by unit $i$; it is, after all, the reciprocal of the inclusion probability. In an SRS, every unit has inclusion probability $\pi_i = n/N$, so all sampling weights are the same: $w_i = N/n$. Intuitively, every unit in the sample represents itself plus $N/n - 1$ of the units in the population that were NOT sampled. Thus, for an SRS,

$$\sum_{i \in \mathcal{S}} w_i = \sum_{i \in \mathcal{S}} \frac{N}{n} = N.$$

Since all weights are the same in an SRS, we call the sample a self-weighting sample: every unit has the same sampling weight.
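To make the weights concrete, here is a minimal Python sketch. The population values, the seed, and the helper name `srs_weights` are made up for illustration. It shows that the weights of an SRS sum to $N$ and that the weighted mean coincides with the plain sample mean.

```python
import random

def srs_weights(N, n):
    """Sampling weights for an SRS of size n drawn from N units."""
    pi = n / N             # inclusion probability, identical for every unit
    return [1 / pi] * n    # w_i = 1 / pi_i = N / n

# Hypothetical population of N = 100 values; draw n = 20 without replacement.
random.seed(0)
population = [random.gauss(50, 10) for _ in range(100)]
sample = random.sample(population, 20)
weights = srs_weights(len(population), len(sample))

total_weight = sum(weights)                          # sums to N
t_hat = sum(w * y for w, y in zip(weights, sample))  # weighted estimate of the population total
y_bar = t_hat / total_weight                         # same as the unweighted sample mean
```

Because the sample is self-weighting, dropping the weights changes nothing for the mean; they only matter when scaling up to a population total.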

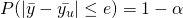

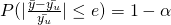

Next, we look at confidence intervals (CIs). For readers with only A-level H2 Mathematics, the confidence level is simply the complement of the level of significance. In statistical analysis we usually start with point estimates (that is what we found previously) and then measure their accuracy, for example with the MSE introduced earlier. A confidence interval is often a more convenient way to report that accuracy. The intuition is as follows: suppose we fix a procedure that produces a $100(1-\alpha)\%$ confidence interval. If we take samples from our population over and over again and construct an interval with that procedure for each possible sample, we expect $100(1-\alpha)\%$ of the resulting intervals to include the true value of the population parameter.

In probability sampling from a finite population, only a finite number of possible samples exist (recall that there are $\binom{N}{n}$ of them for an SRS), and we know the probability with which each will be chosen. So if we were able to generate all possible samples from the population, we would be able to calculate the exact confidence level of our procedure.
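For a tiny population this enumeration can actually be carried out. The sketch below is only an illustration: the eight population values are invented, and the interval used is $\bar{y} \pm 1.96 \cdot SE(\bar{y})$ with $SE(\bar{y}) = \sqrt{(1 - n/N)\, s^2 / n}$. It visits every possible SRS and records the fraction of intervals that contain the true mean.

```python
from itertools import combinations
from math import sqrt

def exact_coverage(population, n, z=1.96):
    """Fraction of all possible SRSs whose interval covers the true mean."""
    N = len(population)
    true_mean = sum(population) / N
    covered = 0
    total = 0
    # combinations() treats positions as distinct, so repeated values are fine.
    for sample in combinations(population, n):
        y_bar = sum(sample) / n
        s2 = sum((y - y_bar) ** 2 for y in sample) / (n - 1)  # sample variance
        se = sqrt((1 - n / N) * s2 / n)                       # includes the FPC
        if y_bar - z * se <= true_mean <= y_bar + z * se:
            covered += 1
        total += 1
    return covered / total, total

population = [1, 2, 4, 4, 7, 7, 7, 8]  # hypothetical values
coverage, n_samples = exact_coverage(population, n=4)
```

Since every sample is equally likely in an SRS, the simple fraction of covering intervals is the exact confidence level. With such a small $n$ it need not be close to the nominal 95%.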

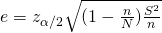

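In practice, we may not know the values for all possible samples, so we use a normal approximation. If $n$, $N$, and $N - n$ are sufficiently large (![]()), then, approximately,

$$\frac{\bar{y} - \bar{y}_U}{\sqrt{1 - \frac{n}{N}}\,\frac{S}{\sqrt{n}}} \sim N(0, 1),$$

where $\bar{y}$ is the sample mean and $\bar{y}_U$ is the population mean.

Recall that $V(\bar{y}) = \left(1 - \frac{n}{N}\right)\frac{S^2}{n}$. Since in reality we often do not know $S^2$, we replace $S^2$ by the sample variance $s^2$, which gives the standard error

$$SE(\bar{y}) = \sqrt{\left(1 - \frac{n}{N}\right)\frac{s^2}{n}}.$$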

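Thus, an approximate $100(1-\alpha)\%$ CI for the population mean is

$$\left[\bar{y} - z_{\alpha/2}\sqrt{1 - \frac{n}{N}}\,\frac{S}{\sqrt{n}},\ \ \bar{y} + z_{\alpha/2}\sqrt{1 - \frac{n}{N}}\,\frac{S}{\sqrt{n}}\right]$$

or, when $S$ is unknown,

$$\left[\bar{y} - z_{\alpha/2}\,SE(\bar{y}),\ \ \bar{y} + z_{\alpha/2}\,SE(\bar{y})\right],$$

where $z_{\alpha/2}$ is the $100(1 - \alpha/2)$th percentile of the standard normal distribution.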
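The interval can be computed in a few lines. This is a minimal sketch, assuming the standard error $\sqrt{(1 - n/N)\, s^2 / n}$ with the sample variance $s^2$ plugged in; the function name `srs_mean_ci` and the data are hypothetical, and the sample is kept small only for readability.

```python
from math import sqrt
from statistics import NormalDist, mean, variance

def srs_mean_ci(sample, N, alpha=0.05):
    """Approximate 100(1 - alpha)% CI for the population mean under SRS."""
    n = len(sample)
    y_bar = mean(sample)
    s2 = variance(sample)                     # sample variance, divisor n - 1
    se = sqrt((1 - n / N) * s2 / n)           # standard error with the FPC
    z = NormalDist().inv_cdf(1 - alpha / 2)   # 100(1 - alpha/2)th percentile
    return y_bar - z * se, y_bar + z * se

# Hypothetical sample of n = 10 from a population of N = 200.
sample = [12, 15, 11, 18, 14, 16, 13, 17, 15, 14]
lower, upper = srs_mean_ci(sample, N=200)
```

In a real analysis the normal approximation needs a much larger $n$ than this toy sample.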

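Now we consider the approximate CI for a proportion. The rule of thumb is that BOTH $n\hat{p}$ and $n(1 - \hat{p})$ must be sufficiently large (a common threshold is at least 5). The interval is then

$$\left[\hat{p} - z_{\alpha/2}\sqrt{\left(1 - \frac{n}{N}\right)\frac{\hat{p}(1 - \hat{p})}{n - 1}},\ \ \hat{p} + z_{\alpha/2}\sqrt{\left(1 - \frac{n}{N}\right)\frac{\hat{p}(1 - \hat{p})}{n - 1}}\right].$$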
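As a quick illustration for a proportion, the sketch below assumes the SRS standard error $\sqrt{(1 - n/N)\,\hat{p}(1 - \hat{p})/(n - 1)}$. The function name `srs_proportion_ci` and the counts are invented for the example.

```python
from math import sqrt
from statistics import NormalDist

def srs_proportion_ci(successes, n, N, alpha=0.05):
    """Approximate 100(1 - alpha)% CI for a population proportion under SRS."""
    p_hat = successes / n
    # For 0/1 data, s^2 = n/(n-1) * p_hat * (1 - p_hat), hence the n - 1 divisor.
    se = sqrt((1 - n / N) * p_hat * (1 - p_hat) / (n - 1))
    z = NormalDist().inv_cdf(1 - alpha / 2)
    return p_hat - z * se, p_hat + z * se

# Hypothetical survey: 120 "yes" answers among n = 400 sampled from N = 10000.
lower, upper = srs_proportion_ci(successes=120, n=400, N=10000)
```

Here both $n\hat{p} = 120$ and $n(1 - \hat{p}) = 280$ are large, so the normal approximation is reasonable.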

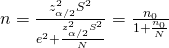

The last thing for today is to find out how to estimate the sample size, and of course, what is a “correct” or proper sample size. We remind ourselves that we must balance between the precision of the estimates and the cost of the survey. From part 4, we recall that it is the absolute size of the sample that matters, not the proportion of the population, and the FPC has little effect on the variance of estimates in large populations. The following describes the steps to estimate the sample size:

- Specify the tolerable error (confidence level $1 - \alpha$ and margin of error $e$).

- Find an equation relating the sample size n and the margin of error.

- Estimate any unknown quantities and solve for n.

- Evaluate and adjust by repeating the steps above. This ensures you do not settle on a sample size much larger than you can afford; if you do, adjust some of your expectations.

The tolerable error can be studied from two different perspective, based on what we want to tweak.

- Absolute Precision

Here, confidence level is usually set at 0.05 and e is simple half-width of the confidence interval.

is usually set at 0.05 and e is simple half-width of the confidence interval.

, where

, where  is the sample size for SRS with replacement.

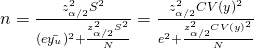

is the sample size for SRS with replacement. - Relative Precision

Instead of adjusting the absolute error, we can also control the CV to achieve a desired relative precision. The idea here is to substitute with e.

with e.

, which is solely determined by CV.

, which is solely determined by CV.
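Both sample-size calculations follow the same pattern: compute the with-replacement size $n_0$, then shrink it by the finite population correction, $n = n_0 / (1 + n_0/N)$. The sketch below assumes $n_0 = (z_{\alpha/2} S / e)^2$ for absolute precision and $n_0 = (z_{\alpha/2}\, CV / r)^2$ for relative precision. The function names, the symbol `r` for the relative error, and the numbers are all illustrative assumptions.

```python
from math import ceil
from statistics import NormalDist

def n_absolute(S, e, N, alpha=0.05):
    """Sample size for margin of error e, given a guess S of the population SD."""
    z = NormalDist().inv_cdf(1 - alpha / 2)
    n0 = (z * S / e) ** 2            # SRS with replacement
    return ceil(n0 / (1 + n0 / N))   # adjust for the finite population

def n_relative(cv, r, N, alpha=0.05):
    """Sample size for relative error r, given a guess cv of the CV."""
    z = NormalDist().inv_cdf(1 - alpha / 2)
    n0 = (z * cv / r) ** 2
    return ceil(n0 / (1 + n0 / N))

# Hypothetical inputs: S = 10, e = 2, N = 1000.
n_abs = n_absolute(S=10, e=2, N=1000)
# Hypothetical inputs: CV = 0.5, relative error r = 0.1, N = 1000.
n_rel = n_relative(cv=0.5, r=0.1, N=1000)
```

Rounding up with `ceil` is a deliberate choice: rounding down would give a margin of error slightly larger than requested.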

So we should realise by now that the CV or $S$ must be estimated. Occasionally our data are too limited to estimate $S$, so we make an intelligent guess.

- If you believe the population is normally distributed, then $S$ can be roughly estimated as range/4, using a range that covers about 95% of the values, or as range/6, using a range that covers nearly all of them. This is because approximately 95% of the values from a normal population are within 2 standard deviations of the mean, and 99.7% of the values are within 3 standard deviations of the mean.

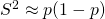

- For a large population, $S^2 \approx p(1 - p)$, and clearly the maximum value of $p(1 - p)$ is $\frac{1}{4}$, attained at $p = \frac{1}{2}$. We can use this value if we have no information on $p$.

In most surveys in which a proportion is measured, ![]() and ![]() . For a large population, an even rougher estimate at the 95% confidence level follows from $z_{\alpha/2}^2 \cdot \frac{1}{4} = 1.96^2/4 \approx 1$: $n_0 \approx \frac{1}{e^2}$, or equivalently $e \approx \frac{1}{\sqrt{n_0}}$.
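To see how close the rough rule is, the following sketch compares the conservative sample size based on $p = 1/2$ with the shortcut $1/e^2$. The margin of error $e = 0.03$ and the function name `n_for_proportion` are chosen only for illustration.

```python
from math import ceil
from statistics import NormalDist

def n_for_proportion(e, p=0.5, alpha=0.05, N=None):
    """Sample size for estimating a proportion to within e.

    p = 0.5 is the conservative choice because it maximises p(1 - p).
    If N is given, the finite population correction is applied.
    """
    z = NormalDist().inv_cdf(1 - alpha / 2)
    n0 = z ** 2 * p * (1 - p) / e ** 2   # large-population sample size
    if N is not None:
        n0 = n0 / (1 + n0 / N)
    return ceil(n0)

e = 0.03                        # hypothetical margin of error
n_exact = n_for_proportion(e)   # 1068
n_rough = ceil(1 / e ** 2)      # 1112, from the 1/e^2 shortcut
```

The shortcut slightly overstates the required size because it rounds $1.96^2/4 \approx 0.96$ up to 1, which errs on the safe side.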

In general, the larger the sample, the smaller the sampling error.

Sampling & Survey #1 – Introduction

Sampling & Survey #2 – Simple Probability Samples

Sampling & Survey #3 – Simple Random Sampling

Sampling & Survey #4 – Qualities of estimator in SRS

Sampling & Survey #5 – Sampling weight, Confidence Interval and sample size in SRS

Sampling & Survey #6 – Systematic Sampling

Sampling & Survey #7 – Stratified Sampling

Sampling & Survey #8 – Ratio Estimation

Sampling & Survey #9 – Regression Estimation

Sampling & Survey #10 – Cluster Sampling

Sampling & Survey #11 – Two-Stage Cluster Sampling

Sampling & Survey #12 – Sampling with unequal probabilities (Part 1)

Sampling & Survey #13 – Sampling with unequal probabilities (Part 2)

Sampling & Survey #14 – Nonresponse
